These properties apply globally to all data sources sent to Kafka for ingestion, including any data sources that you may have configured earlier with different properties.

The delay property sets the point in time where Splunk UBA begins Kafka ingestion. The default is 180 seconds (3 minutes) earlier than the start of the current minute. For example, if Kafka ingestion is enabled at 10 seconds past 1:02 PM, then the beginning of the minute is 1:02 PM, and specifying a delay of 120 seconds means that the first batch query begins processing events at 1:00 PM. The query runs on the events within the specified interval of time defined by .conds. Do not configure this property to exceed 10800 seconds (3 hours). You can configure the data ingestion start time for any individual data source by adding the data source name to the end of the property, for example to configure a delay of 120 seconds for a data source named exampledatasource.

The interval property sets the length of time, in seconds, for each batch query. The default is 60 seconds, meaning that each query searches for 60 seconds' worth of events, starting from the time defined by .conds. Do not configure the interval to exceed 4 minutes. You can configure the query interval for any individual data source by adding the data source name to the end of the property, for example to configure an interval of 120 seconds for a data source named exampledatasource. Setting this property for an individual data source overrides the value of the .conds property.

The lag is the amount of time between the end time of the most recent batch query and the time Kafka ingestion starts. For example, if the first batch query ends at 1:00 PM and 59 seconds, and Kafka ingestion starts at 1:02 PM and 10 seconds, then the lag at that time is 1 minute and 11 seconds. If the lag exceeds the configured ., Splunk UBA shows an alert in the health monitor.

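
The timing arithmetic in the examples above can be checked with a short Python sketch. It is only an illustration of the description, not Splunk UBA's implementation: the function names are hypothetical, the date is arbitrary, and the assumption that a 60-second batch starting at 1:00:00 PM ends at 1:00:59 PM is taken from the lag example.

```python
from datetime import datetime, timedelta

MAX_DELAY_SECONDS = 10800   # 3 hours, the stated upper limit for the delay
MAX_INTERVAL_SECONDS = 240  # 4 minutes, the stated upper limit for the interval


def ingestion_start(enabled_at, delay_seconds=180):
    """Start of the current minute, moved back by the delay."""
    if delay_seconds > MAX_DELAY_SECONDS:
        raise ValueError("delay must not exceed 10800 seconds")
    minute_start = enabled_at.replace(second=0, microsecond=0)
    return minute_start - timedelta(seconds=delay_seconds)


def first_batch_end(start, interval_seconds=60):
    """Last second covered by the first batch query (assumed inclusive)."""
    if interval_seconds > MAX_INTERVAL_SECONDS:
        raise ValueError("interval must not exceed 4 minutes")
    return start + timedelta(seconds=interval_seconds - 1)


def lag(last_batch_end, kafka_ingestion_started_at):
    """Time between the end of the most recent batch and ingestion start."""
    return kafka_ingestion_started_at - last_batch_end


# Kafka ingestion enabled at 10 seconds past 1:02 PM (the date is arbitrary).
enabled = datetime(2024, 1, 1, 13, 2, 10)
start = ingestion_start(enabled, delay_seconds=120)
end = first_batch_end(start, interval_seconds=60)

print(start.time())       # 13:00:00
print(end.time())         # 13:00:59
print(lag(end, enabled))  # 0:01:11
```

With the default delay of 180 seconds, the same call would place the start at 12:59 PM instead.
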
First, modify or add the properties in the /etc/caspida/local/conf/uba-site.properties file. See the table for the property names, descriptions, and default values. Then synchronize the cluster and restart Splunk UBA to make the configuration changes take effect.
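
The "modify or add" step could be scripted along the following lines. This is a minimal sketch that assumes the file uses plain `key=value` lines with `#` comments; `set_property` is a hypothetical helper, and `example.property.name` is a placeholder, not a real Splunk UBA property name. The sketch works on text only, so writing the result back to the file, synchronizing the cluster, and restarting Splunk UBA remain manual steps.

```python
def set_property(text, key, value):
    """Return properties text with key set to value.

    An existing assignment of the key is replaced in place;
    otherwise a new line is appended at the end.
    """
    out = []
    replaced = False
    for line in text.splitlines():
        stripped = line.strip()
        # Only non-comment lines containing "=" are treated as assignments.
        if stripped and not stripped.startswith("#") and "=" in stripped:
            existing_key = stripped.split("=", 1)[0].strip()
            if existing_key == key:
                out.append(f"{key}={value}")
                replaced = True
                continue
        out.append(line)
    if not replaced:
        out.append(f"{key}={value}")
    return "\n".join(out) + "\n"


# Placeholder property name, for illustration only.
original = "# local overrides\nexample.property.name=60\n"
print(set_property(original, "example.property.name", 120))
```
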

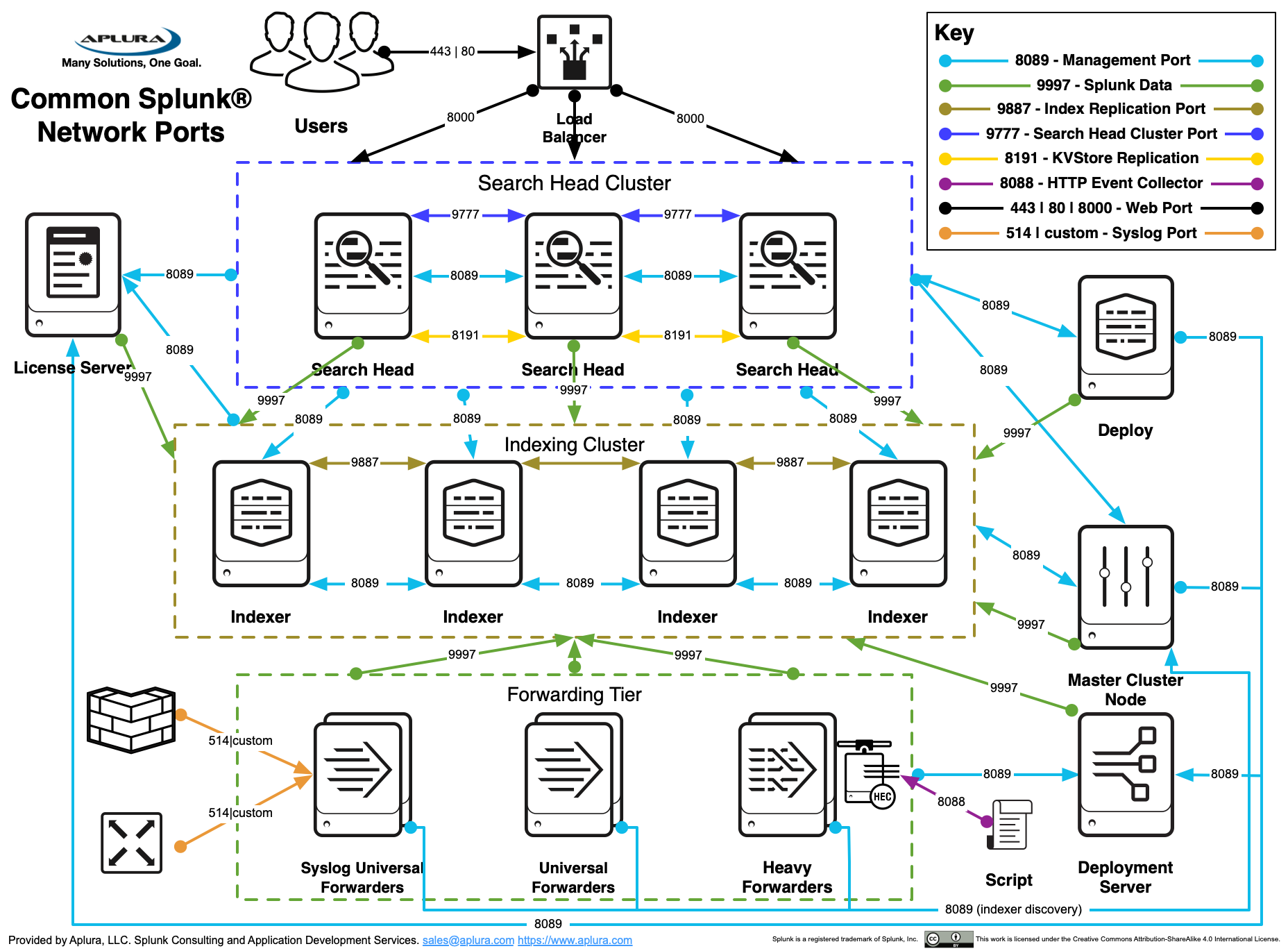

Splunk’s query language is mainly used for parsing log files and extracting reference information from machine-produced data. It is especially useful for companies that have a number of data sources which need to be processed and analyzed simultaneously to produce results in real time.

Splunk is specifically created for dealing with the kind of log files that machines create and making them human readable. Splunk’s mission statement is “to make machine data accessible, usable and valuable to everyone”. With the huge increases in machine data being produced by the IoT (Internet of Things) and IT infrastructure, this makes it an important contributor to the field. The Splunk API, which has a web-style interface to input a Splunk query, allows data users to search, monitor, and analyze machine-produced big data. There are a few reasons why machine data makes processing and analysis difficult: there is wide divergence in the technology systems generating it, which can include networks, sensors, applications, devices, and servers, and the produced data and possible formats are extremely unpredictable. Splunk makes considerable use of AI and machine learning to deliver more intelligent results to a Splunk query. This gives users the opportunity to better visualize the data that they are creating and what it means for their business. It also allows companies to scale up their data analysis operations as their needs get larger.

Kafka ingestion works by issuing multiple micro-batch queries, with consecutive time ranges connected to each other, against live data from Splunk Enterprise. Running real-time indexed searches on Splunk Enterprise is not required. See How data gets in to Splunk UBA in Get Data into Splunk User Behavior Analytics for information about ingesting data sources not using Kafka. Some data sources are known to have a lag when ingested into the Splunk platform, such as batch files that are ingested periodically. In such cases, you can adjust the Kafka ingestion properties to make sure that the data is still ingested by Splunk UBA. The configuration steps for the Kafka ingestion properties are given in this post.
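
The idea of micro-batch queries with consecutive, connected time ranges can be pictured with a small Python sketch. It is an illustration under assumptions, not Splunk UBA's actual scheduler: `micro_batch_ranges` is a hypothetical name, and each range is treated as half-open, so that one range ends exactly where the next begins.

```python
from datetime import datetime, timedelta


def micro_batch_ranges(start, interval_seconds, count):
    """Yield (earliest, latest) pairs; each range starts where the last ended."""
    earliest = start
    for _ in range(count):
        latest = earliest + timedelta(seconds=interval_seconds)
        yield earliest, latest
        earliest = latest


# Three consecutive 60-second ranges starting at 1:00 PM (arbitrary date).
for earliest, latest in micro_batch_ranges(datetime(2024, 1, 1, 13, 0, 0), 60, 3):
    print(earliest.time(), "to", latest.time())
```

Because the ranges share their boundaries, no event time falls between two queries, which is why a continuously running real-time search is not needed to cover the stream.
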

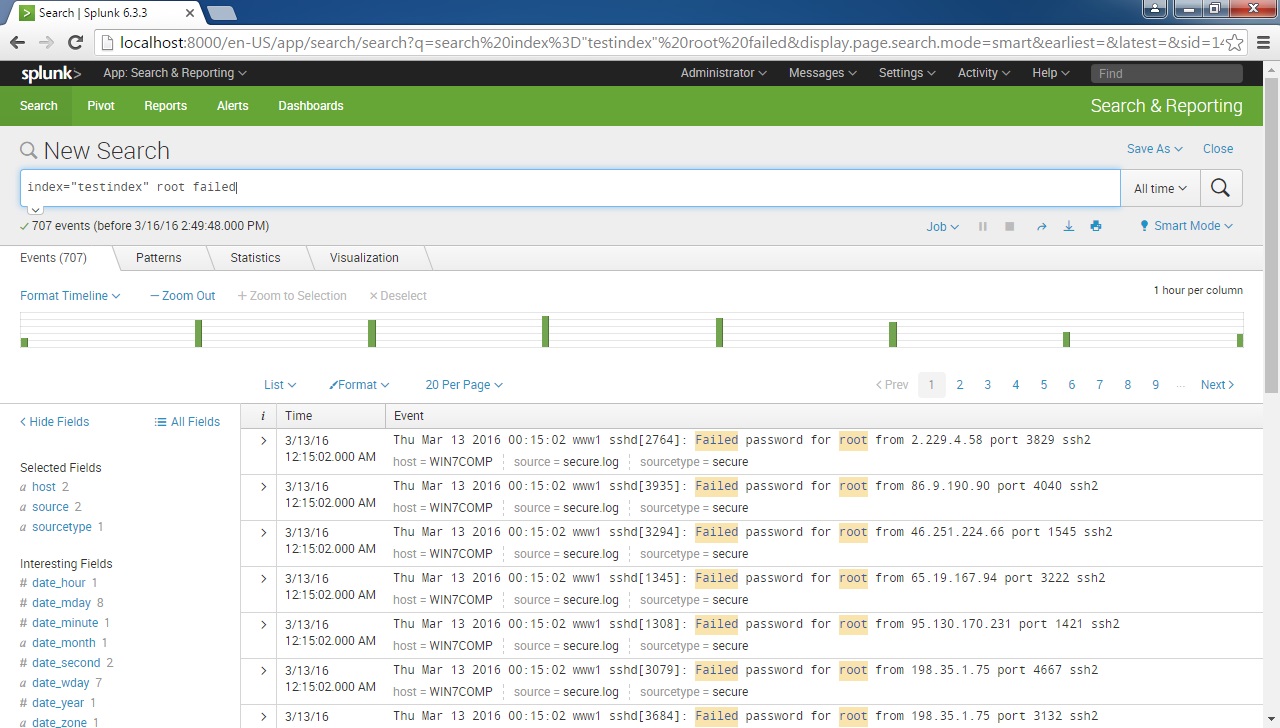

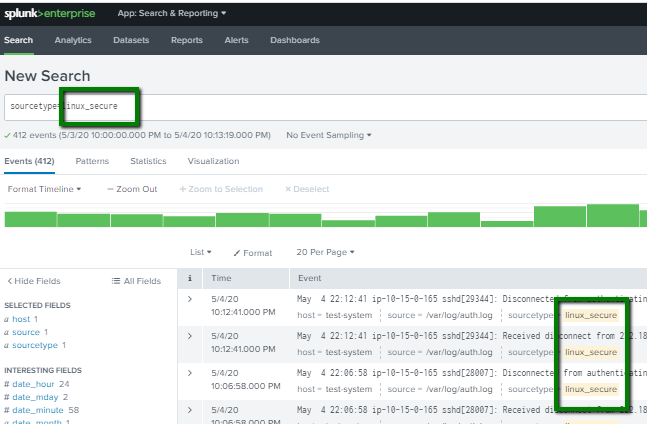

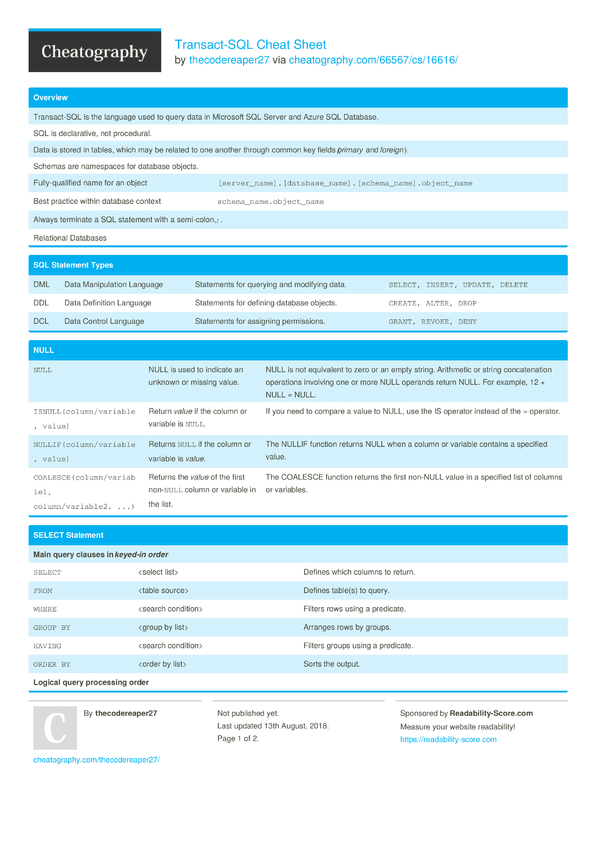

A Splunk query is used to run a specific operation within the Splunk software. A Splunk query uses the software’s Search Processing Language to communicate with a database or source of data. This allows data users to perform analysis of their data by querying it. It can be compared to SQL in that it is used for updating, querying, and transforming the data in databases.
